AI search engines may look similar on the surface. They answer questions, summarize information, and often provide links to sources. However, the way different AI platforms retrieve, evaluate, and cite those sources is not exactly the same.

These differences matter. For businesses trying to improve visibility in AI-generated answers, understanding how each system selects sources can influence content strategy, technical setup, and the overall Generative Engine Optimization (GEO) approach. And yes, having a strong website is the baseline: without one, there is nothing for AI to cite.

In this article, we take a closer look at how major AI platforms choose the sources behind their answers. You will see how systems like Google AI Overviews, ChatGPT Search, Perplexity, Copilot, Claude, and Gemini retrieve information, verify it, and decide which pages are worth citing.

All the explanations are based on official platform documentation and industry tests, helping you understand what actually influences AI visibility today.

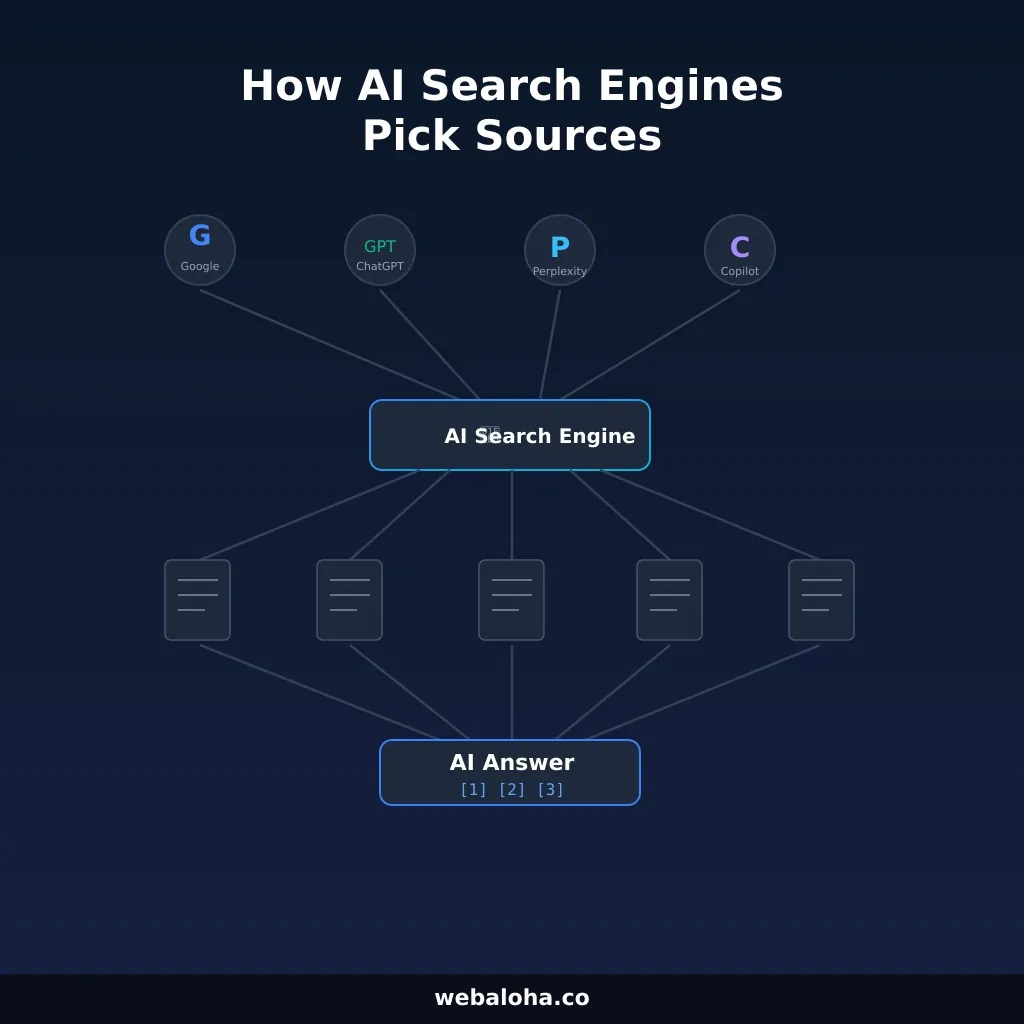

The Shared Pipeline Behind AI Search

Most major AI search experiences follow a retrieval-augmented pattern: they transform the query, retrieve candidate pages or passages, rank the most useful evidence, ground the answer in those sources, and then present a synthesized response with some form of attribution. Google explains the search side of this directly in its AI features documentation, Microsoft describes web-grounded answer generation in Copilot documentation, and the broader mechanism matches what modern RAG research describes.

1. Query expansion comes first

The user’s exact prompt is often just the starting point. Google says AI features may use query fan-out across related subtopics. OpenAI says ChatGPT Search typically rewrites queries into one or more targeted searches. Microsoft says Copilot web grounding uses generated search queries sent to Bing. In plain English, you are not just competing for one keyword anymore. You are competing across a cloud of related sub-questions.

2. Passage retrieval matters more than page-level fame

AI systems usually work with chunks, excerpts, and passages, not only with whole pages. That is one reason compact, direct, evidence-rich sections can outperform long pages that bury the answer deep below the fold. Research on fine-grained grounded citations and grounded response generation reinforces the same idea: the easier it is to map a claim to supporting evidence, the easier it is to cite.
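To make the passage idea concrete, here is a toy sketch in Python. It splits a page into passages on blank lines and picks the one that shares the most terms with a query. The scoring rule, the function names, and the sample page are all assumptions made for illustration; no platform documents its ranking this way, and real systems use far richer signals.

```python
import re

def split_into_passages(text):
    """Split plain text into passages on blank lines."""
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric terms."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def overlap_score(query, passage):
    """Fraction of query terms that also appear in the passage (toy relevance)."""
    query_terms = tokenize(query)
    if not query_terms:
        return 0.0
    return len(query_terms & tokenize(passage)) / len(query_terms)

def best_passage(query, text):
    """Return the passage with the highest overlap score for the query."""
    return max(split_into_passages(text), key=lambda p: overlap_score(query, p))

# Hypothetical page: only the middle passage answers the question directly.
PAGE = """Our company was founded in a small garage and has grown steadily.

IndexNow is a protocol that lets a site notify search engines when a URL changes.

Contact us to learn more about our services."""

print(best_passage("what is indexnow protocol", PAGE))
```

Even in this simplified form, the direct definition wins over the introduction and the call to action, which is the point of writing compact, answer-first sections.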

3. Verification filters weak claims

Even when a page is retrieved, it still has to survive trust checks. Microsoft is unusually explicit here and says Copilot’s public website flow performs grounding checks, provenance checks, and semantic similarity cross-checks. Anthropic documents result filtering inside its web search tool. Practically, that means unsupported claims, vague authorship, stale numbers, and fluffy copy all become more dangerous in AI search than they used to be.

4. Citation UI changes how users trust the answer

Perplexity leans into numbered citations. ChatGPT Search uses inline citations and a Sources panel. Google presents supporting links inside AI features through Search, while Bing and Copilot surface source links inside generative search experiences. The display differs, but the principle is the same: the platform wants enough evidence to justify showing the answer.

The user's prompt is rarely the final query. Systems rewrite, decompose, or fan out into multiple sub-queries before retrieving anything.

Systems retrieve passages and page chunks, not just whole URLs. Compact, evidence-rich sections can outperform long pages that bury the answer.

Retrieved content must survive trust checks before it reaches the generated answer. Unsupported claims, vague authorship, and stale data are filtered out.

Every platform presents sources differently. The citation format affects user trust, publisher visibility, and whether you can actually measure AI-driven traffic.

How AI Platforms Differ in Source Selection

Google AI Overviews, AI Mode, and Gemini

Google’s biggest practical difference is breadth. Its AI features are tied to Search, and Google says pages do not need special AI-only markup to appear there. They need to be indexed and eligible to show a snippet. Google also states that AI features may identify more supporting pages than classic search results alone. That makes topical coverage, strong internal linking, and clean passage structure especially important. You can audit your own internal linking health with our internal link analyzer.

Another important distinction is policy control. Google separates Search visibility from Gemini training and grounding controls with Google-Extended. Google says Google-Extended does not affect inclusion in Search, which means a publisher can keep search visibility while setting stricter rules around some Gemini use cases. For site owners, that is a governance lever, not a ranking trick.

The main GEO lesson for Google is simple: build strong topic clusters, answer adjacent sub-questions, keep content indexable, and do not assume one ranking position guarantees AI citations. Large-scale Ahrefs studies now suggest AI Overviews often cite beyond the classic top 10 and can reduce organic CTR for top-ranked pages, which changes the traffic math even when visibility stays high. See the Ahrefs CTR update and citation overlap study for the underlying data.

ChatGPT Search

ChatGPT Search is more explicit than many people realize. OpenAI says it uses OAI-SearchBot for search inclusion, distinguishes it from GPTBot for training-related crawling, and also documents ChatGPT-User for user-triggered fetches. This is not a tiny technical detail. If a site blocks the search bot, it may reduce or eliminate its chances of being surfaced inside ChatGPT Search.
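A quick way to sanity-check this kind of setup is to run your robots.txt rules through Python's standard `urllib.robotparser`. The sketch below assumes a site that wants to appear in ChatGPT Search while opting out of training crawls. The rules and the example.com URL are hypothetical, and the standard-library parser only approximates how any particular crawler interprets robots.txt.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: allow the search bot, block the training bot.
ROBOTS_TXT = """\
User-agent: OAI-SearchBot
Allow: /

User-agent: GPTBot
Disallow: /
"""

def can_fetch(robots_txt, user_agent, url):
    """Return True if the given rules allow user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

for agent in ("OAI-SearchBot", "GPTBot", "ChatGPT-User"):
    print(agent, can_fetch(ROBOTS_TXT, agent, "https://example.com/pricing"))
```

In this sketch ChatGPT-User has no group of its own and there is no wildcard group, so the parser treats it as allowed. If that is not your intent, add an explicit group for it.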

OpenAI also says ChatGPT Search rewrites prompts into targeted queries and can search iteratively. That makes fan-out optimization very relevant here too. Strong definition sections, comparisons, up-to-date pages, and concise answers all help. On measurement, OpenAI gives publishers one practical gift: referral URLs can include utm_source=chatgpt.com, which makes ChatGPT-driven traffic easier to segment in analytics than many other AI platforms.
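If your analytics tool does not segment this for you, the check is easy to sketch yourself. The Python example below looks for `utm_source=chatgpt.com` in a list of landing URLs. The function name and sample URLs are made up for illustration, and the input is assumed to be full landing-page URLs exported from your own logs or analytics.

```python
from urllib.parse import urlparse, parse_qs

def is_chatgpt_referral(landing_url):
    """Return True if the URL carries utm_source=chatgpt.com."""
    query = parse_qs(urlparse(landing_url).query)
    return "chatgpt.com" in query.get("utm_source", [])

# Hypothetical landing URLs from an analytics export.
LANDING_URLS = [
    "https://www.example.com/pricing?utm_source=chatgpt.com",
    "https://www.example.com/blog/geo-basics?utm_source=newsletter",
    "https://www.example.com/docs",
]

chatgpt_visits = [url for url in LANDING_URLS if is_chatgpt_referral(url)]
print(len(chatgpt_visits), "of", len(LANDING_URLS), "visits came from ChatGPT")
```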

The business takeaway is that ChatGPT visibility is not just about brand mentions. It is also about allowing the right bot, publishing crisp answerable sections, and making sure the page can win one of the rewritten sub-queries rather than only the head term.

Perplexity

Perplexity has one of the clearest citation experiences on the market. Its help documentation says answers include numbered citations linking to original sources. That makes it a particularly good environment for evidence-backed content, especially when each section contains clean claims and sourceable facts.

Perplexity documents separate agents: PerplexityBot for surfacing sites in search results and Perplexity-User for user-triggered fetches. The company says PerplexityBot respects robots rules for indexing, while Perplexity-User generally acts as a user-requested fetcher. That creates a familiar modern pattern: indexing and user retrieval are no longer the same thing.

Perplexity also sits in a more controversial ecosystem conversation because Cloudflare publicly accused it of using stealth crawlers, while Perplexity separately documents its crawler behavior and robots stance. For publishers, the lesson is straightforward: do not rely on assumptions. Watch logs, verify bot access, and align robots, WAF, and CDN rules with your actual policy.
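Watching logs can start very small. The Python sketch below counts requests per AI agent and HTTP status, so a run of 403s for a bot you meant to allow stands out. It assumes access logs in the common "combined" format, and the sample lines and user-agent strings are simplified illustrations rather than exact copies of what each bot sends. User-agent strings can also be spoofed, so treat this as a first pass and confirm against each vendor's published verification guidance.

```python
import re
from collections import Counter

# Agent tokens named in this article; extend the list to match your own policy.
AI_AGENT_TOKENS = [
    "OAI-SearchBot", "GPTBot", "ChatGPT-User",
    "PerplexityBot", "Perplexity-User",
    "ClaudeBot", "Claude-User", "Claude-SearchBot",
]

# Captures the status code and the final quoted field (user agent) of a combined-format line.
LOG_PATTERN = re.compile(r'" (\d{3}) \S+ "[^"]*" "([^"]*)"\s*$')

def summarize_ai_hits(log_lines):
    """Count (agent token, HTTP status) pairs for requests from listed AI agents."""
    counts = Counter()
    for line in log_lines:
        match = LOG_PATTERN.search(line)
        if not match:
            continue
        status, user_agent = match.group(1), match.group(2).lower()
        for token in AI_AGENT_TOKENS:
            if token.lower() in user_agent:
                counts[(token, status)] += 1
    return counts

# Illustrative log lines only.
SAMPLE_LOG = [
    '203.0.113.5 - - [01/Jan/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (compatible; PerplexityBot/1.0)"',
    '203.0.113.6 - - [01/Jan/2026:10:00:05 +0000] "GET /docs HTTP/1.1" 403 312 "-" "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)"',
    '198.51.100.7 - - [01/Jan/2026:10:00:09 +0000] "GET / HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (Windows NT 10.0) Firefox/130.0"',
]

for (agent, status), hits in summarize_ai_hits(SAMPLE_LOG).items():
    print(agent, status, hits)
```

In the sample, the 403 for OAI-SearchBot is the kind of signal that suggests a WAF or CDN rule is overriding what robots.txt says.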

Microsoft Copilot and Bing generative search

Microsoft is one of the most useful platforms for GEO teams because it documents both mechanism and measurement. Bing’s generative search and Copilot Search are grounded in Bing results, and Microsoft explains that Copilot can issue additional search queries on the user’s behalf. On top of that, Copilot Studio says its public website answer pipeline includes grounding, provenance, and semantic similarity checks. Very few platforms are this direct.

Microsoft also launched AI Performance in Bing Webmaster Tools, which gives site owners visibility into citations, cited pages, and grounding queries across Microsoft AI answers. That makes Bing and Copilot unusually actionable. You can see not only whether you were cited, but also which pages were used and which queries grounded those citations.

For publishers, Microsoft’s environment rewards structured pages, fresh content, and strong information architecture. Make sure your sitemap is clean and your schema markup validates correctly, because both feed directly into Bing’s indexing pipeline. Microsoft’s environment also rewards operational discipline. Microsoft and the broader Bing ecosystem support IndexNow, which can help get updates discovered faster. If a brand is publishing timely documentation, pricing changes, product updates, or breaking insights, that freshness advantage can matter.
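For teams that want to see what an IndexNow submission looks like, here is a minimal Python sketch that builds the request without sending it. The endpoint and JSON field names follow the public IndexNow protocol as we understand it, so check them against the current documentation before relying on them. The host, key, and URLs are placeholders, and the sketch assumes your key file is hosted at the site root.

```python
import json
from urllib.request import Request

# Shared endpoint described in the IndexNow documentation (verify before use).
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"

def build_indexnow_request(host, key, urls):
    """Build (but do not send) a POST request announcing changed URLs."""
    payload = {
        "host": host,
        "key": key,
        # Assumes the key file is served from the site root as <key>.txt.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )

# Placeholder values for illustration only.
request = build_indexnow_request(
    "www.example.com",
    "abc123",
    ["https://www.example.com/pricing", "https://www.example.com/changelog"],
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# To actually submit: urllib.request.urlopen(request), with a real host and key.
```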

Claude and Anthropic

Anthropic now documents three separate bots: ClaudeBot, Claude-User, and Claude-SearchBot. The documentation explains that blocking the search bot can reduce a site’s visibility in user search results, while blocking the training bot affects a different use case. Again, modern AI visibility is not controlled by one simple on or off switch.

On the developer side, Anthropic’s web search tool explains a repeated search-and-answer workflow and notes that Claude automatically returns cited sources. Anthropic also documents filtering behavior around search results before they are loaded into context. That points in the same direction as the other major platforms: pages that are easy to parse, easy to validate, and rich in direct evidence have a better chance of surviving retrieval and citation filters.

Claude is especially relevant for technical and research-heavy topics because clean, well-labeled documentation tends to fit its retrieval and citation style well. For that kind of content, messy JavaScript-only rendering and vague unsupported claims are self-sabotage. A fast, well-structured site also helps. If you are running WordPress, making sure you are on the recommended PHP version is one of the simplest ways to improve server response times.

AI Accessibility and Technical GEO Importance

One of the biggest 2026 mistakes is treating GEO as purely a content exercise. It is not. Technical access still decides whether AI systems can reach and use your pages in the first place.

That means validating robots.txt is only one part of the puzzle. Firewalls, CDN rules, bot verification, IP allowlists, JavaScript rendering, and snippet controls can all affect whether your content gets indexed, fetched, summarized, or cited by AI.

For example, if your key info only appears after client-side rendering, or if your CDN quietly blocks AI crawlers, your “GEO strategy” may never even reach the starting line. You can check how AI and search engines actually see your site with our AI search visibility checker.

What this Means for GEO Strategy in the Real World

The shared fundamentals across platforms are more important than any single platform trick. If you want a realistic GEO strategy, focus on the things that travel well across systems.

Write pages that answer a specific question fast: The opening lines under major headings should answer the implied question directly. AI systems love clarity. Users love it too. Long theatrical intros are decorative fog.

Increase evidence density: Where you make a strong claim, support it with a source, a data point, a benchmark, a definition, or an official reference. Citation-friendly content usually looks denser, cleaner, and more accountable.

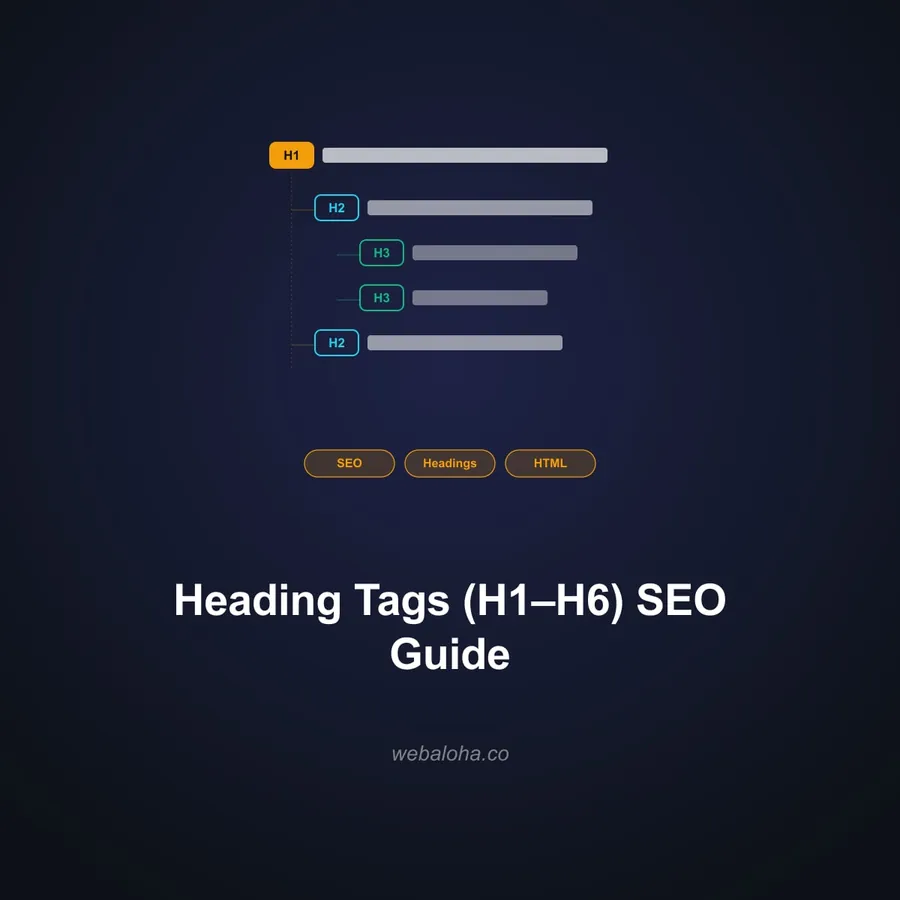

Design for passages, not only pages: A great page is made of great sections. Distinct H2 and H3 blocks, concise summaries, comparisons, and tightly grouped evidence improve your odds of winning retrieval at the passage level. A quick heading structure check can reveal whether your pages are well-organized for passage-level retrieval.
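A heading check like that can be sketched in a few lines of Python with the standard `html.parser` module. The example below extracts the heading outline from an HTML string and flags headings that jump more than one level deeper than the one before (for example, an H4 directly under an H2). The "no skipped levels" rule is our own simple heuristic, not a documented requirement of any AI platform, and the sample HTML is made up.

```python
from html.parser import HTMLParser

class HeadingOutline(HTMLParser):
    """Collect (level, text) pairs for h1-h6 elements in document order."""

    def __init__(self):
        super().__init__()
        self.headings = []
        self._level = None
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self._level = int(tag[1])
            self._buffer = []

    def handle_data(self, data):
        if self._level is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            self.headings.append((self._level, "".join(self._buffer).strip()))
            self._level = None

def extract_headings(html):
    """Return the heading outline of an HTML string."""
    outline = HeadingOutline()
    outline.feed(html)
    outline.close()
    return outline.headings

def skipped_levels(headings):
    """Return headings that sit more than one level below the previous heading."""
    problems, previous = [], 0
    for level, text in headings:
        if level > previous + 1:
            problems.append((level, text))
        previous = level
    return problems

# Hypothetical page fragment.
SAMPLE_HTML = "<h1>GEO guide</h1><p>Intro.</p><h2>Crawler access</h2><h4>Edge cases</h4>"

headings = extract_headings(SAMPLE_HTML)
print(headings)
print(skipped_levels(headings))
```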

Keep important text in HTML: If critical content depends on JavaScript rendering, some crawlers and fetch tools may miss it or handle it inconsistently. Core information should be visible in the source HTML whenever possible.
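One rough way to test this is to look at the raw HTML your server returns, before any JavaScript runs, and check whether your key facts are in it. The Python sketch below strips script and style content from an HTML string and reports which phrases are missing from the remaining text. The sample page and phrases are hypothetical. In practice you would feed it the response body fetched without a browser, and you should expect individual crawlers to differ in how much JavaScript they execute.

```python
from html.parser import HTMLParser

class VisibleText(HTMLParser):
    """Collect text that sits outside script and style elements."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)

def missing_phrases(raw_html, phrases):
    """Return the phrases that do not appear in the non-script text of raw_html."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(" ".join(parser.parts).split()).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]

# Hypothetical page: one plan is in the HTML, the other only exists inside a script.
RAW_HTML = """<html><body>
<h1>Pricing</h1>
<p>The Starter plan costs $19 per month.</p>
<div id="app"></div>
<script>render({enterprise: "Enterprise plan: contact sales"});</script>
</body></html>"""

print(missing_phrases(RAW_HTML, ["Starter plan costs $19", "Enterprise plan"]))
```

Any phrase this reports as missing is content that a fetcher without JavaScript support would likely never see.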

Strengthen entity consistency off-site: Your brand name, author identity, company profiles, and core claims should look consistent across your website and trusted third-party platforms. The broader web is part of the trust layer now.

Measure what you can, and admit what you cannot: Microsoft currently offers the strongest first-party AI visibility reporting. ChatGPT traffic can be segmented through its referral parameter. Google AI traffic is still blended into Search Console web reporting, so analysis often requires inference rather than clean isolation.

If that sounds like a lot of moving parts, it is. That’s exactly what Web Aloha’s GEO services are built to handle for business owners.

Summary: Optimize Your Website for All AI Systems

The winning approach in 2026 is to publish retrieval-ready content, keep your site technically accessible, support claims with real evidence, and build consistent trust signals across your site and the wider web. Do that well, and you are building a source that multiple AI systems can trust and mention.

Want to improve your business’s visibility across multiple AI platforms? Web Aloha’s GEO Services are built for exactly that.

How AI Search Engines Pick Sources: FAQ

How do AI search engines pick sources?

Most AI search engines follow a four-step pipeline: query expansion (rewriting the user’s prompt into sub-queries), passage retrieval (pulling relevant chunks from indexed pages), verification and grounding (filtering out unsupported or low-trust content), and citation (presenting sources alongside the generated answer). The broad shape is shared across Google AI Overviews, ChatGPT Search, Perplexity, Microsoft Copilot, and Claude, but each platform handles retrieval, trust checks, and citation differently.

Do Google AI Overviews, ChatGPT, and Perplexity use the same sources?

No. Google AI Overviews draw from Google Search systems and use query fan-out across subtopics. ChatGPT Search rewrites prompts into targeted queries sent to external search providers. Perplexity runs live web retrieval on nearly every query. They use different crawlers, different retrieval backends, different verification logic, and different citation formats. A page cited by one platform is not automatically cited by the others.

Why does one AI platform cite my site while another does not?

Several factors cause this. Your page may be indexed by one system’s crawler but blocked by another’s. The content may match one platform’s rewritten sub-queries but not another’s. Verification standards differ too: Microsoft Copilot explicitly runs grounding, provenance, and semantic similarity checks, while other platforms are less transparent about filtering. Crawler access, content structure, evidence density, and freshness all play a role, and each platform weighs them differently.

What makes a page easier for AI systems to cite?

Pages that answer specific questions directly under clear headings, support claims with sources or data, and keep important content in server-rendered HTML are easier for AI systems to retrieve and cite. Clean passage structure matters because AI systems work with chunks, not just whole pages. Off-page signals like consistent entity information and third-party mentions also strengthen citation potential across platforms.

Do AI search engines read entire pages or just parts of them?

In most cases, AI systems retrieve passages or sections rather than treating a page as one block. Research on retrieval-augmented generation and grounded citations supports the idea that compact, evidence-rich sections can outperform longer pages that bury the answer. That is why designing for passage-level retrieval, with distinct H2/H3 blocks, concise answers, and grouped evidence, matters more than overall page length.

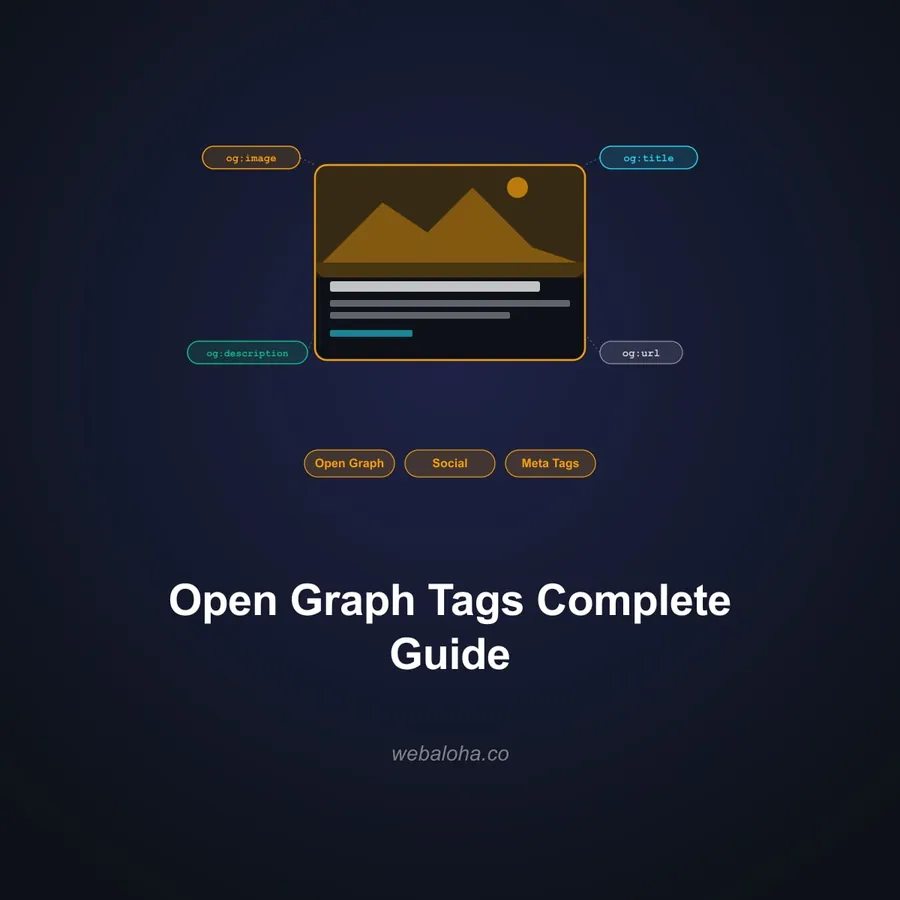

Does schema markup help with AI visibility?

Schema markup helps by giving machines clearer signals about what the page covers, who wrote it, and how it fits into your site. Google explicitly states that no special AI-only schema is required, but structured data should match visible content. Schema is best understood as a semantic clarity layer, not a ranking trick. It reduces ambiguity during retrieval, which is useful across multiple AI platforms. You can check yours with our schema markup validator.

Are AI crawlers different from traditional search crawlers?

Yes, and most platforms now operate multiple bots for different purposes. OpenAI uses OAI-SearchBot for search inclusion, GPTBot for training, and ChatGPT-User for user-triggered fetches. Perplexity separates PerplexityBot (indexing) from Perplexity-User (user fetches). Anthropic runs ClaudeBot, Claude-User, and Claude-SearchBot. Blocking the wrong bot can remove your site from AI search results without affecting training, or vice versa. Understanding which crawlers to allow is a core part of technical GEO.

Can I block AI training crawlers but still appear in AI search results?

In most cases, yes. Google’s Google-Extended token controls Gemini training and grounding without affecting Search inclusion. OpenAI confirms that blocking GPTBot while allowing OAI-SearchBot is a valid configuration. Anthropic separates ClaudeBot (training) from Claude-SearchBot (search indexing). The key is configuring your robots.txt and WAF rules per bot rather than treating AI access as a single on/off switch. Our robots.txt tester can help you verify your setup.
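To show what "per bot" means in practice, here is a small Python sketch that renders robots.txt groups from a simple policy table. The policy shown (block the training-related tokens, allow the search bots) is only one possible configuration, and the function and variable names are our own. Review the output against each vendor's current crawler documentation before deploying it, and remember that robots.txt does not control what your WAF or CDN does.

```python
def build_robots_txt(policy):
    """Render one robots.txt group per user-agent token.

    policy maps a user-agent token to True (allow everything) or False (block everything).
    """
    groups = []
    for token, allowed in policy.items():
        rule = "Allow: /" if allowed else "Disallow: /"
        groups.append(f"User-agent: {token}\n{rule}")
    return "\n\n".join(groups) + "\n"

# Example policy: opt out of training-related use, stay visible in AI search.
POLICY = {
    "Google-Extended": False,
    "GPTBot": False,
    "ClaudeBot": False,
    "OAI-SearchBot": True,
    "Claude-SearchBot": True,
    "PerplexityBot": True,
}

print(build_robots_txt(POLICY))
```

Keeping the policy in one table makes it easier to review who is allowed and why, and to keep WAF and CDN rules aligned with the same decisions.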

Which AI platform offers the best citation measurement for publishers?

Microsoft currently leads. Its AI Performance dashboard in Bing Webmaster Tools reports total citations, cited pages, and grounding queries across Copilot and Bing AI answers. ChatGPT Search adds utm_source=chatgpt.com to referral URLs, making traffic easy to segment in analytics. Google AI traffic is blended into Search Console’s Web reporting with no separate AI breakdown. Perplexity and Claude do not offer first-party citation dashboards for site owners.

Why does source selection matter for GEO?

Because GEO is not just about publishing good content. It is about becoming a source that AI systems trust enough to retrieve, verify, and cite. If you understand how different platforms pick sources (their crawlers, verification steps, and citation logic), you can build a strategy that works across multiple AI systems rather than optimizing blindly for one.

Should businesses optimize for one AI platform or for all of them?

Start with the shared fundamentals: retrieval-ready content, clean HTML, evidence-backed claims, strong entity consistency, and correct crawler access. These travel well across Google AI Overviews, ChatGPT Search, Perplexity, Microsoft Copilot, and Claude. Then account for platform-specific differences, like measurement through Bing AI Performance, referral tracking through ChatGPT’s utm parameter, or Google-Extended governance for Gemini.

Can Web Aloha help improve AI visibility across different platforms?

Yes. Web Aloha helps businesses improve AI visibility through GEO audits, content restructuring for passage-level retrieval, schema implementation, crawler access configuration, entity alignment, and cross-platform measurement setup. We work across Google, ChatGPT, Perplexity, Copilot, and Claude surfaces. If you want your pages to become easier for AI platforms to retrieve and cite, take a look at our GEO Services.