Duplicate content is not a Google penalty. You will not wake up one morning to find your site manually demoted because two URLs serve the same blog post.

But duplicate content still kills rankings. It splits link equity across variants, burns crawl budget on pages that do not matter, confuses Google about which URL to rank, and now increasingly muddies the waters for AI search engines that consolidate near-duplicate sources before deciding who to cite.

This is the pillar guide: what duplicate content actually is, where it comes from, how to detect it, and the five fixes you have in your toolkit. We will spend extra time on the two places duplicate content does the most invisible damage: ecommerce sites and AI search.

The Duplicate Content Penalty Myth

Google Search Central is explicit on this point. The official documentation on duplicate content states that duplicate content is generally not grounds for action on a site, and that Google does not recommend blocking duplicate content through robots.txt. The only time duplicate content triggers a manual action is when it is clearly deceptive in origin or intent, such as large-scale content scraping designed to manipulate rankings.

John Mueller has repeated this on record, on podcasts, at conferences, and in office hours. There is no duplicate content penalty. Ordinary duplicates (tracking parameters, www versus non-www, filter pages) trigger consolidation, not penalization.

Here is what actually happens:

- Google crawls multiple URLs with the same or very similar content

- It clusters them and picks one as the canonical version

- It shows only that canonical URL in search results

- Ranking signals (links, engagement, content freshness) are consolidated onto the canonical

The damage is not a penalty. The damage is:

- Split link equity. Backlinks to /product?id=342 do not fully consolidate to /blue-widget/ without a clear canonical signal.

- Wasted crawl budget. Googlebot spends its finite budget crawling filter combinations instead of your new content.

- Wrong URL in search results. Google may pick the ugly parameterized version to display, hurting click-through rate.

- Ranking instability. When signals are split, rankings fluctuate more than they should.

None of this is punishment. It is Google’s best-effort deduplication. Your job is to make its job easy.

Internal vs External Duplicate Content

Duplicate content falls into two categories.

Internal duplicates exist within a single domain. Most are accidental: CMS quirks, URL parameters, protocol variations, or category/tag overlaps.

External duplicates exist across domains. Some are intentional (syndication partnerships, press releases). Some are malicious (scrapers). Some are structural (manufacturer product descriptions used by thousands of retailers).

Internal duplicates are almost always your problem to fix. External duplicates depend on who controls the other domain and whether the copying was authorized.

The 14 Most Common Sources of Duplicate Content

| # | Source | Example | Typical Fix |
|---|---|---|---|
| 1 | HTTP vs HTTPS | http://site.com and https://site.com both resolve | Force HTTPS via 301 redirect |
| 2 | www vs non-www | www.site.com and site.com both resolve | 301 redirect to chosen version |
| 3 | Trailing slash inconsistency | /page and /page/ both return 200 | 301 redirect to chosen form |
| 4 | URL parameters (sorting, filtering) | /category?sort=price | Canonical to base URL |
| 5 | Session IDs in URLs | /product?sid=a83k2 | Canonical or cookie-based sessions |
| 6 | UTM and tracking tags | /blog/?utm_source=email | Self-referencing canonical on base |
| 7 | Faceted navigation | /shoes/?color=red&size=10&brand=nike | Canonical to base category, selective noindex |
| 8 | Pagination | /blog/page/2/ | Self-referencing canonical per page |
| 9 | Printer-friendly versions | /article/?print=1 | Canonical or noindex |
| 10 | Legacy AMP or mobile subdomains | m.site.com/page | Canonical to desktop URL |
| 11 | Language switcher without hreflang | /services and /en/services | hreflang + self-referencing canonicals |
| 12 | Staging/dev sites accidentally indexed | staging.site.com | noindex, basic auth, or block via robots |
| 13 | Category + tag URL overlap | /category/seo/ and /tag/seo/ list same posts | noindex tag archives or consolidate |
| 14 | Content syndication + scraping | Your article republished on Medium or stolen | Cross-domain canonical or DMCA |

The first six are trivial to fix with server configuration and a self-referencing canonical on every page. The middle section is where most real work happens. The bottom three require strategy, not just technique.
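To make the first six rows concrete, here is a minimal Python sketch of the URL normalization they imply. It assumes a site policy of HTTPS, non-www, and trailing slashes; the function name `normalize_url` and the extra tracking keys (`gclid`, `fbclid`) are illustrative assumptions, not part of any standard. In production the same logic would normally live in server redirect rules and canonical tags.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Assumed policy for this sketch: HTTPS, non-www host, trailing slash.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"sid", "gclid", "fbclid"}  # illustrative list, adjust per site


def normalize_url(url: str) -> str:
    """Return the preferred form of a URL under the assumed policy."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path or "/"
    # Add a trailing slash unless the last segment looks like a file name.
    last_segment = path.rsplit("/", 1)[-1]
    if not path.endswith("/") and "." not in last_segment:
        path += "/"
    # Drop tracking and session parameters; keep the rest in original order.
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in TRACKING_KEYS and not key.startswith(TRACKING_PREFIXES)
    ]
    return urlunsplit(("https", host, path, urlencode(kept), ""))
```

Running every internal link and sitemap entry through a function like this is a quick way to spot URLs that do not match your chosen form.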

How to Detect Duplicate Content: A 5-Method Workflow

No single tool catches everything. Combine these five methods for full coverage.

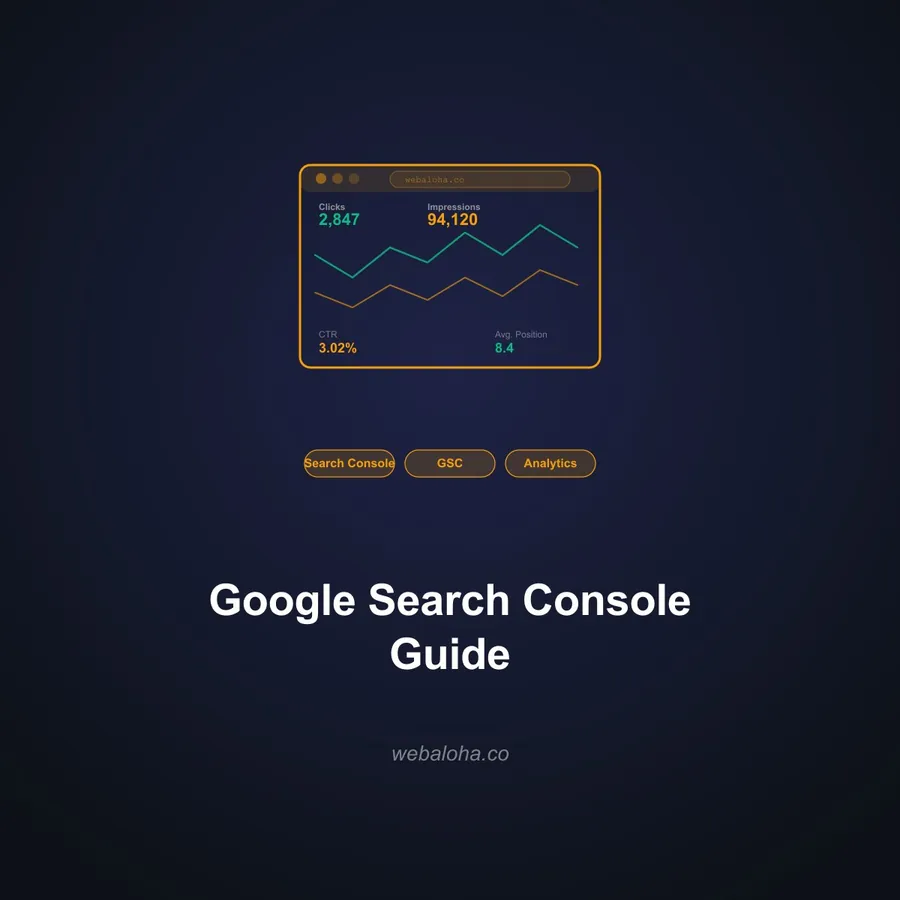

1. Google Search Console Pages Report

The single most authoritative source. Under Indexing > Pages, look for:

- Duplicate without user-selected canonical: pages Google grouped as duplicates without a clear canonical signal from you

- Duplicate, Google chose different canonical than user: your canonical is being overridden

- Alternate page with proper canonical tag: duplicates where your canonical is working correctly (this is fine)

Work through “Duplicate without user-selected canonical” first. Those are pages Google has already identified as problems. Our Google Search Console guide walks through the full workflow.

2. site: Search Operators

Quick spot checks. Run these in Google:

site:yourdomain.com "distinctive sentence from your page"

site:yourdomain.com inurl:?utm

site:yourdomain.com inurl:?sort

If more than one URL returns for your distinctive sentence, you have a duplicate. If parameter URLs appear in the index, your canonical or parameter handling is not working.

3. Crawler-Based Audits

Run a full site crawl with Screaming Frog, Sitebulb, or Ahrefs Site Audit. Look at:

- Near-duplicates cluster (similarity threshold 90%+)

- Pages with the same title tag

- Pages with the same H1

- Pages with the same meta description

Crawlers compare content hashes across thousands of URLs in minutes, something you cannot do manually. Our technical SEO audit guide covers how to structure a full audit.
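If you want a feel for what a near-duplicate score means, the sketch below compares pages using word shingles and Jaccard similarity. This is one common technique, not a description of how any specific crawler works; the function names and the 0.9 default (mirroring the 90% threshold above) are assumptions for illustration.

```python
import re
from itertools import combinations


def shingles(text: str, size: int = 3) -> set:
    """Split text into overlapping word n-grams (shingles)."""
    words = re.findall(r"\w+", text.lower())
    if len(words) < size:
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by all distinct shingles."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict, threshold: float = 0.9) -> list:
    """Return (url_a, url_b, score) for each pair at or above the threshold."""
    sets = {url: shingles(body) for url, body in pages.items()}
    pairs = []
    for url_a, url_b in combinations(sorted(sets), 2):
        score = jaccard(sets[url_a], sets[url_b])
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 2)))
    return pairs
```

Pairwise comparison grows quadratically with page count, so this suits a few hundred extracted page bodies, not a full crawl.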

4. On-Demand Two-URL Comparisons

When you suspect two specific URLs are duplicates but want a quick answer, paste both into a comparison tool. Our free Duplicate Content Checker returns a similarity score and highlights the overlapping blocks.

5. Canonical and Redirect Spot Checks

After implementing fixes, verify they stuck. Use the Canonical URL Checker to confirm canonical tags are present and point to live pages, and the Redirect Checker to confirm 301s chain cleanly to the final destination.
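For a scripted version of the canonical spot check, a minimal sketch using only Python's standard-library HTML parser might look like this. It works on HTML you have already fetched; the class name, function name, and status labels are assumptions chosen for the example.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Collect the href of every <link rel="canonical"> in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower().split()
        if "canonical" in rel and attr.get("href"):
            self.canonicals.append(attr["href"])


def canonical_status(html: str, expected: str) -> str:
    """Classify a page's canonical tag against the URL you expect."""
    parser = CanonicalParser()
    parser.feed(html)
    if not parser.canonicals:
        return "missing"
    if len(set(parser.canonicals)) > 1:
        return "conflicting"  # more than one distinct canonical on the page
    return "ok" if parser.canonicals[0] == expected else "mismatch"
```

The "conflicting" case is worth watching on CMS sites where more than one plugin writes to the page head.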

The 5 Fixes for Duplicate Content: Decision Matrix

You have five options. Picking the wrong one is how duplicate content problems become worse.

| Fix | What It Does | Use When | Avoid When |
|---|---|---|---|
| 301 Redirect | Permanently moves users and bots to the target URL | The duplicate URL should never be visited again (site migrations, URL structure changes, retired pages) | Users still need the duplicate URL accessible |
| Canonical Tag | Tells Google which URL to index; both remain accessible | Filter pages, tracking parameters, syndicated articles, language-relevant duplicates | The duplicate should be entirely removed or blocked |
| Noindex Meta Tag | Keeps page accessible to users but removes it from the index | Internal search results, thin filter pages, tag archives, thank-you pages | The page has any chance of ranking or earning traffic |
| Parameter Handling | Tells Google how to treat URL parameters at the domain level | Large sites with consistent parameter patterns that cannot be canonicalized individually | Small sites where canonicals are easier |
| Content Rewrite | Makes the pages genuinely different so neither is a duplicate | Thin category overlap, near-duplicate product descriptions from manufacturer feeds | Pure technical duplicates (protocols, parameters) |
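One way to read the matrix is as a short decision function. The sketch below is a deliberate simplification: the three yes/no questions and the names `DuplicateUrl` and `choose_fix` are assumptions made for illustration, and parameter handling is left out because it now happens through the other fixes.

```python
from dataclasses import dataclass


@dataclass
class DuplicateUrl:
    users_need_it: bool        # must the URL stay reachable for visitors?
    has_preferred_twin: bool   # is there a preferred URL with the same content?
    technical_duplicate: bool  # caused by protocol, parameters, or CMS quirks?


def choose_fix(page: DuplicateUrl) -> str:
    """Map the three questions onto the fixes in the matrix."""
    if not page.users_need_it:
        return "301 redirect"      # the duplicate URL should die
    if not page.technical_duplicate:
        return "content rewrite"   # differentiate the pages or merge them
    if page.has_preferred_twin:
        return "canonical tag"     # both stay live, one should rank
    return "noindex"               # stays live, should not be indexed
```

For example, a sorted category URL that shoppers still use is a technical duplicate with a preferred twin, so it lands on the canonical tag.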

301 Redirect

Use when the duplicate URL should die. Site migrations, permalink changes, retired products, domain consolidations. A 301 passes almost all link equity and removes the old URL from the index within days to weeks. It is the strongest signal you have.

Canonical Tag

Use when both URLs must stay live but only one should rank. This is the workhorse of duplicate content fixes for ecommerce and large content sites. We will not re-cover implementation details here: the canonical tags explained guide has the full syntax, platform guides, and common-mistakes breakdown.

Noindex

Use when a page must stay accessible (for users, for internal search, for checkout flow) but must not appear in search results. Critical detail: do not combine noindex with a canonical pointing elsewhere. The signals contradict each other, and you cannot rely on Google honoring either one as intended.
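The noindex-plus-canonical conflict is easy to test for once you have a page's HTML. The following sketch flags it using the standard-library parser; it only reads the robots meta tag (not an X-Robots-Tag HTTP header), and the names `HeadSignals` and `has_conflicting_signals` are assumptions for this example.

```python
from html.parser import HTMLParser


class HeadSignals(HTMLParser):
    """Record whether a page is noindexed and where its canonical points."""

    def __init__(self):
        super().__init__()
        self.noindex = False
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attr.get("name", "").lower() == "robots":
            directives = [d.strip() for d in attr.get("content", "").lower().split(",")]
            if "noindex" in directives:
                self.noindex = True
        elif tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href") or None


def has_conflicting_signals(html: str, page_url: str) -> bool:
    """True when a page is noindexed and also canonicals to a different URL."""
    signals = HeadSignals()
    signals.feed(html)
    return (signals.noindex
            and signals.canonical is not None
            and signals.canonical != page_url)
```

A noindexed page with a self-referencing canonical passes this check, since the two signals do not point in different directions.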

URL Parameter Handling

Note: Google retired the URL Parameters tool in Search Console in 2022. Parameter handling is now implicit through canonicals, robots.txt, and Google’s own pattern detection. For large ecommerce sites, this means your canonical discipline must be strong because you no longer have a centralized override. Use robots.txt carefully to block parameter crawling only when you are sure those URLs have no value.

Content Rewrite

The nuclear option, and often the right one. If two pages are duplicates because they were written to target the same keyword with slightly different wording, consolidate them. Pick the stronger URL, merge the unique content from the weaker one, 301 the loser to the winner. This is how you fix keyword cannibalization, not just duplicate content.

Ecommerce: Where Duplicate Content Becomes a Crisis

For content sites, duplicate content is a nuisance. For ecommerce, it is existential. A mid-size store with 5,000 products can easily generate 500,000 crawlable URLs through faceted navigation alone. That is 100 URL variants per product, most of which serve the same content block.
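The arithmetic behind that explosion is simple multiplication. As a rough sketch, assume each facet on a category page is either unset or set to exactly one value; the facet counts below are made-up numbers, not data from a real store.

```python
from math import prod


def facet_url_count(options_per_facet: dict) -> int:
    """URLs one category page can produce, including the unfiltered page.

    Each facet contributes (options + 1) states: unset, or one chosen value.
    Different parameter orderings of the same filters are not counted.
    """
    return prod(count + 1 for count in options_per_facet.values())


# Hypothetical category with three facets.
facets = {"color": 10, "size": 8, "brand": 12}
print(facet_url_count(facets))  # 11 * 9 * 13 = 1287
```

Three modest facets already turn one category page into more than a thousand URLs, before multi-select filters or parameter order add more.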

Faceted Navigation

The single biggest source of ecommerce duplicates. Every combination of filter (color, size, brand, price range, material, rating) creates a new URL.

/shoes/womens/

/shoes/womens/?color=black

/shoes/womens/?color=black&size=8

/shoes/womens/?color=black&size=8&brand=nike

/shoes/womens/?brand=nike&color=black&size=8

The last two are identical in content but have different URLs because parameter order differs. Your canonical discipline needs to normalize parameter order, or Google will cluster them as separate duplicates.
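Normalizing parameter order is a few lines of code. Here is a minimal sketch that sorts query parameters alphabetically so both orderings collapse to one URL; the function name `sort_query` is an assumption, and alphabetical order is just one consistent choice.

```python
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode


def sort_query(url: str) -> str:
    """Return the URL with its query parameters in alphabetical order."""
    parts = urlsplit(url)
    params = sorted(parse_qsl(parts.query, keep_blank_values=True))
    return urlunsplit(parts._replace(query=urlencode(params)))
```

Apply the same ordering wherever your platform builds filter links, so the unsorted variants never get generated in the first place.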

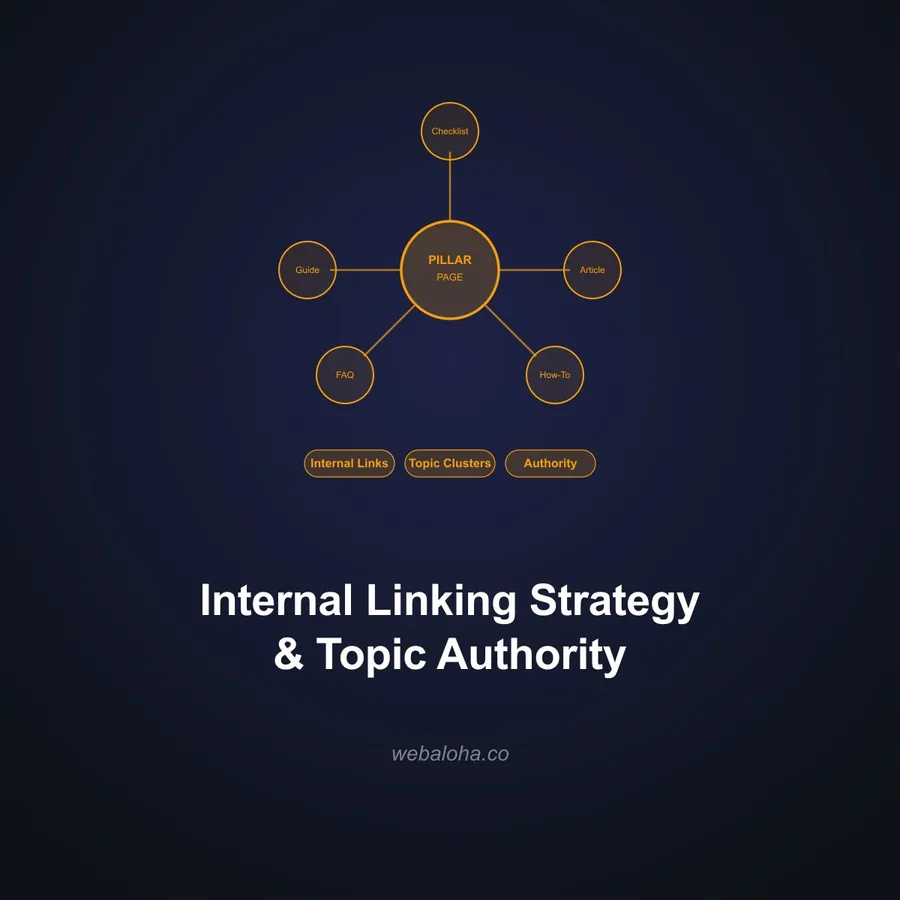

The pattern that works:

- Base category page has a self-referencing canonical

- All filter-combination URLs canonical to the base category page

- Filter combinations with real search demand (like /shoes/womens/black/) get promoted to dedicated URLs with their own canonical and unique content

- Deep filter stacks (3+ filters) are blocked from crawling via robots.txt to protect crawl budget
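The pattern above can be sketched as a small policy function. It assumes filters live in the query string and promoted pages live at their own paths, as in the examples; the name `facet_policy` and the two-filter crawl limit (derived from the "3+ filters" rule) are assumptions for illustration.

```python
from urllib.parse import urlsplit, parse_qsl

MAX_CRAWLABLE_FILTERS = 2  # stacks of 3+ filters are treated as crawl waste


def facet_policy(url: str) -> dict:
    """Return the canonical target and crawl decision for a category URL."""
    parts = urlsplit(url)
    filter_count = len(parse_qsl(parts.query))
    return {
        # Base and promoted pages have no query, so they canonical to themselves;
        # filtered URLs canonical to the same path with the query removed.
        "canonical": parts.path,
        "crawl": filter_count <= MAX_CRAWLABLE_FILTERS,
    }
```

Keep in mind that a URL blocked in robots.txt is never fetched, so Google cannot read a canonical tag on it. For blocked URLs the canonical value here is informational only.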

Product Variants

Color and size variants generate URL bloat. A shirt in 5 colors and 4 sizes is 20 variant URLs that share 95% of their content.

Shopify handles this well by default: variant selectors update the URL via parameters, and the main product page has a canonical that variants respect. WooCommerce is more fragile. Our WooCommerce website development work frequently involves fixing default canonical behavior, especially on variable products, where plugins can inject multiple canonical tags or generate indexable variant URLs.

The decision:

- Variants with genuinely unique content (different descriptions, different images, different reviews) get their own URLs with self-referencing canonicals

- Variants that only differ in a selector (same description, same everything else) canonical to the base product

Category + Tag Overlap

Classic WordPress and many WooCommerce stores expose two URLs listing the same products: /product-category/shoes/ and /product-tag/shoes/. Both show the same listing. Both can accumulate rankings. Both split link equity.

Pick one. Noindex the other. Usually category wins because it fits your information architecture (IA) better.

Manufacturer Product Descriptions

Ten thousand retailers sell the same pair of headphones. All ten thousand copy the manufacturer’s description verbatim. Google now has 10,000 identical product pages and has to pick one to rank. It is almost never the smaller retailer.

This is not fixable with a canonical. It is fixable with content. Every product page needs at least 100-200 words of genuinely unique content: your perspective, use cases, pairing suggestions, FAQ answers, customer pull-quotes. The product pages that win in competitive ecommerce niches do this at scale.

Content Syndication: Do It Without Destroying Your Original

Syndication is a legitimate growth channel. Guest posts, Medium cross-posts, trade publication republishing. All three help distribution. All three can cannibalize your original if you do not manage them.

The rules:

- Cross-domain canonical on the syndicated copy. The publisher adds <link rel="canonical" href="https://yoursite.com/original/" /> to their copy. This is non-negotiable. If they refuse, do not syndicate.

- Wait for your original to index before republishing. Google needs to see the original first. A 2-week buffer is safe; 3-7 days is the minimum if you can verify indexing through Search Console’s URL Inspection tool.

- Do not syndicate verbatim to every possible outlet. Rewrite meaningfully for each venue, or pick a single syndication partner. The internet does not need ten identical copies.

- Medium is a special case. Use Medium’s “Import” feature, which automatically sets a canonical back to your original. Do not paste the article directly.

- Guest posts are not syndication. A guest post is original content you wrote for another site, not republished from yours. It has its own canonical on the host site.

Duplicate Content and AI Search: The New Dimension

Traditional SEO treats duplicates as a deduplication problem. AI search treats duplicates as an authority problem, and the consequences are more severe.

When an LLM-powered search engine (Perplexity, ChatGPT search, Google AI Overviews, Claude’s web search) decides which source to cite, it performs semantic deduplication. Near-duplicate content gets clustered into a single concept, and one source gets picked to represent that concept. The others vanish from consideration entirely. There is no equivalent of “ranking #2” in AI citation; you are cited or you are not.

Three implications for your content strategy:

- Near-duplicate content loses harder in AI search than in traditional SEO. A page that would rank on page 2 of Google may get zero AI citations if the model decides your page is a less canonical version of a competitor’s.

- Entity authority consolidates to one URL. If your content exists at three URLs across your domain and syndication partners, the model picks one. If the winner is your syndication partner’s copy, you lose the entity association even though you wrote the content.

- Unique angle beats volume. Ten pages covering the same topic from slightly different angles used to be a long-tail strategy. In AI search, it is a liability. One definitive page with distinctive structure, original data, and a clear canonical URL outperforms ten semi-duplicate variations.

This is why our content optimization for AI citations framework starts with canonical discipline and unique-angle validation before any optimization work.

Duplicate Content Prevention Checklist

Fixing existing duplicates is the first project. Preventing new ones is the ongoing discipline.

- Force HTTPS site-wide via 301 redirect; add HSTS

- Pick www or non-www and 301 the other

- Pick trailing-slash or non-trailing-slash URLs and enforce it

- Self-referencing canonical on every indexable page

- Sitemap lists only canonical URLs (validate with our Sitemap Checker)

- Internal links point to canonical URLs, never to parameter variants

- Faceted navigation has a documented canonical strategy

- Parameter-heavy URLs (UTMs, sorting, session IDs) canonical to base

- Pagination uses self-referencing canonicals, not canonical-to-page-1

- Tag archives are noindexed or consolidated with categories

- Staging and dev environments are noindexed and basic-auth protected

- Syndicated content requires cross-domain canonical in writing

- Product pages have unique content beyond manufacturer descriptions

- Monthly review of Search Console’s Pages report for new duplicate patterns

A clean canonical strategy plus disciplined URL patterns prevents 90% of duplicate content problems before they start. The remaining 10% is syndication, scrapers, and edge cases you handle case by case.
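Several checklist items can be automated. As one example, this sketch scans a sitemap for entries that are unlikely to be canonical: plain-HTTP URLs and URLs carrying a query string. Those two rules are assumptions that fit the policy described in this guide, and the function name is illustrative; adapt the rules if your canonical URLs legitimately use parameters.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlsplit

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def non_canonical_sitemap_urls(sitemap_xml: str) -> list:
    """Return sitemap entries that use plain HTTP or carry a query string."""
    root = ET.fromstring(sitemap_xml)
    flagged = []
    for loc in root.findall("sm:url/sm:loc", SITEMAP_NS):
        url = (loc.text or "").strip()
        parts = urlsplit(url)
        if parts.scheme != "https" or parts.query:
            flagged.append(url)
    return flagged
```

An empty result does not prove the sitemap is clean, but any flagged URL is worth a look during the monthly review.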

The Duplicate Content Bottom Line

Duplicate content is not a penalty. It is friction. Friction that splits your link equity, drains your crawl budget, muddles your AI citations, and makes every other SEO investment less efficient.

You do not need to eliminate every duplicate on your site. You need to make sure Google and AI systems can always tell which URL is the canonical version for any given piece of content. That is a combination of technical hygiene (redirects, canonicals, noindex), strategic decisions (consolidate or differentiate), and prevention (IA, URL patterns, syndication rules).

If your site is more than a few hundred URLs and you have not audited for duplicates in the last year, there is almost certainly hidden damage. Start with the Search Console Pages report and our SEO services team can handle the rest.

Duplicate Content FAQ

Is there a duplicate content penalty in Google?

No. Google Search Central explicitly states that duplicate content is not grounds for manual action unless it is being used to deceive users or manipulate rankings. What actually happens with ordinary duplicate content is consolidation: Google picks one version to index, may not rank the others, and splits ranking signals across variants until it decides. That is not a penalty, but it still damages rankings and wastes crawl budget.

What is duplicate content in SEO?

Duplicate content is substantive blocks of content that appear at multiple URLs, either within a single domain (internal duplicates) or across different domains (external duplicates). Examples include HTTP vs HTTPS versions of a page, URLs with tracking parameters, faceted category pages in ecommerce, near-identical product descriptions copied from manufacturer feeds, and content republished on Medium or syndication partners.

How do I find duplicate content on my site?

Use Google Search Console’s Pages (Indexing) report to find pages flagged as ‘Duplicate without user-selected canonical’ or ‘Duplicate, Google chose different canonical than user.’ Supplement that with a crawl using Screaming Frog or Ahrefs Site Audit to compare content hashes, and run spot checks with a site: search in Google combined with distinctive sentences in quotes.

When should I use a canonical tag vs a 301 redirect vs noindex?

Use a 301 redirect when the duplicate URL should never be accessed again. Use a canonical tag when both URLs must remain live for users but only one should rank (filtered ecommerce pages, tracking parameters, syndicated articles). Use noindex when the page should stay accessible but must not appear in search results at all, like thin filter combinations or internal search result pages.

How does duplicate content affect ecommerce sites?

Ecommerce sites are the single biggest source of duplicate content issues. Faceted navigation, product variants, category-tag overlap, and manufacturer-supplied descriptions generate thousands of near-duplicate URLs. Without disciplined canonical strategy and parameter handling, crawl budget gets wasted on filter combinations while real product pages are crawled too rarely to rank.

Does duplicate content hurt AI search and LLM citations?

Yes, more than traditional SEO in some ways. Large language models consolidate near-duplicate content to a single canonical source when deciding what to cite. If your article exists on three URLs, or has been republished across multiple domains without canonical attribution, the model may cite a competitor or syndication partner instead of you. Distinctive, unique content at a clear canonical URL is the foundation of AI citation authority.

What should I do about scraped content stolen from my site?

First, confirm your original is indexed and has a self-referencing canonical. Most scrapers do not outrank the original if your authority and crawl frequency are higher. If a scraper does outrank you, file a DMCA notice through Google’s Copyright Removal tool. For ongoing protection, submit each new URL through Search Console’s URL Inspection tool (Request Indexing) right after publishing so Google indexes the original first.