If search engines cannot find your pages, nothing else you do for SEO matters. A sitemap is the most direct way to make sure they can.

This guide explains everything you need to know: what sitemaps are, why they matter, how to build and submit one, and the mistakes that silently hurt your crawl coverage.

What Is a Sitemap?

A sitemap is a file or page that lists the URLs on your website that you want search engines and visitors to find. It acts as a roadmap, showing what exists on your site and where to find it.

There are two main types: XML sitemaps for search engines and HTML sitemaps for human visitors. They serve different purposes and often both exist on the same site.

XML Sitemap

An XML sitemap is a structured, machine-readable file, typically located at yourdomain.com/sitemap.xml. It lists your important URLs along with optional metadata like the last modified date.

Here is what a minimal XML sitemap looks like:

    <?xml version="1.0" encoding="UTF-8"?>
    <urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <url>
        <loc>https://example.com/</loc>
        <lastmod>2025-07-01</lastmod>
      </url>
      <url>
        <loc>https://example.com/services/</loc>
        <lastmod>2025-06-15</lastmod>
      </url>
      <url>
        <loc>https://example.com/our-first-post/</loc>
        <lastmod>2025-07-10</lastmod>
      </url>
    </urlset>

This is what Google’s crawler reads when it processes your sitemap.
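If you would rather generate this file than type it by hand, the structure above can be produced with a few lines of Python. This is a minimal sketch using only the standard library; the function name build_sitemap and the (url, lastmod) input shape are illustrative assumptions, not part of any sitemap tool.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Return sitemap XML as bytes for a list of (url, lastmod) pairs.

    build_sitemap is a hypothetical helper for illustration.
    lastmod may be None, because the tag is optional.
    """
    # Setting xmlns as a plain attribute declares the default namespace.
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="UTF-8", xml_declaration=True)


if __name__ == "__main__":
    xml_bytes = build_sitemap([
        ("https://example.com/", "2025-07-01"),
        ("https://example.com/services/", "2025-06-15"),
    ])
    print(xml_bytes.decode("utf-8"))
```

ElementTree escapes special characters in the URL text for you, which removes one common source of invalid sitemaps.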

HTML Sitemap

An HTML sitemap is a regular page on your website, usually linked from the footer, that lists all major sections and pages. It helps human visitors find content on large or complex sites.

HTML sitemaps have limited SEO value compared to XML sitemaps, but they do pass some internal link equity and help users navigate. For most small business sites, an HTML sitemap is optional.

XML vs HTML Sitemaps at a Glance

| Feature | XML Sitemap | HTML Sitemap |
|---|---|---|
| Primary audience | Search engine crawlers | Human visitors |
| Format | Machine-readable XML file | Standard HTML web page |
| SEO impact | High: speeds up discovery and indexing | Low to moderate: improves internal links |
| Submission | Submitted to Search Console | Not submitted, linked normally |
| Required? | Strongly recommended | Optional |
| Best for | All sites with 5+ pages | Large sites with complex navigation |

Why Sitemaps Matter for SEO

Search engines discover pages through two primary methods: following links and reading sitemaps. Links are the more powerful discovery signal, but sitemaps fill the gaps that links miss.

Crawl Budget Efficiency

Google allocates a crawl budget to every website based on its size, authority, and server health. That budget is the number of pages Google will crawl within a given time frame. Wasting it on thin pages, duplicates, or pagination means your important content gets crawled less often.

A well-structured sitemap tells Google exactly which pages are worth its time. Combined with a solid robots.txt file that blocks irrelevant sections, you direct crawl resources where they matter most. Server performance also plays a role: Google crawls more when a site responds quickly and reliably, and backs off when it sees slow responses or server errors.

Faster Indexing for New Content

Without a sitemap, Google discovers new pages by following links. If a page has no incoming links, it may never be found. If it only has one internal link buried three levels deep, it could take weeks to be discovered.

Submitting a sitemap through Google Search Console puts new URLs directly on Google’s radar. For time-sensitive content like news articles or product launches, this can mean the difference between being indexed in hours vs. weeks.

Orphaned Page Discovery

Orphaned pages are pages on your site with no internal links pointing to them. They are invisible to crawlers that rely on link-following alone. A sitemap explicitly lists them, giving crawlers a path to reach content that would otherwise never be indexed.

This is especially relevant after site migrations or redesigns, where pages often get orphaned unintentionally. A solid technical SEO audit will surface these issues.

Metadata Communication

Beyond URLs, XML sitemaps can communicate useful signals: when a page was last modified (<lastmod>), what type of content it contains (via sitemap extensions for images, video, or news), and how pages are organized relative to each other via sitemap index files.

Types of Sitemaps

The standard XML sitemap handles most use cases, but Google supports specialized sitemap formats for richer content types.

| Sitemap Type | What It Covers | Best For | Special Tags |
|---|---|---|---|
| XML Sitemap | Standard web pages | All websites | loc, lastmod |
| Image Sitemap | Images embedded in pages | Photography, e-commerce | image:image, image:loc |
| Video Sitemap | Hosted video content | Media sites, courses | video:title, video:duration |
| News Sitemap | Recent news articles (48 hrs) | News publishers | news:publication, news:title |
| Sitemap Index | References to multiple sitemaps | Large sites (50K+ URLs) | sitemap, loc, lastmod |

Image Sitemaps

Google cannot always discover all images on a page through crawling alone, especially when images are loaded via JavaScript or CSS. An image sitemap extension provides that metadata directly.

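This matters for image search traffic, which can drive significant volume for product-heavy or visual content sites. The tag that matters is the image URL (<image:loc>). Google has deprecated the optional title, caption, license, and geo location tags, so put that information on the page itself (alt text, captions, structured data) rather than in the sitemap.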
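As a rough illustration, the sketch below builds an image sitemap with Python's standard library. It assumes Google's image extension namespace (http://www.google.com/schemas/sitemap-image/1.1) and an input dictionary that maps each page URL to the images on it. The function name build_image_sitemap is hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"

# Serialize the sitemap namespace as the default one and the image
# extension with the conventional "image:" prefix.
ET.register_namespace("", SITEMAP_NS)
ET.register_namespace("image", IMAGE_NS)


def build_image_sitemap(pages):
    """pages maps a page URL to the list of image URLs embedded on it."""
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page_url, image_urls in pages.items():
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page_url
        for image_url in image_urls:
            # Each image gets its own <image:image> wrapper with one <image:loc>.
            image = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image, f"{{{IMAGE_NS}}}loc").text = image_url
    return ET.tostring(urlset, encoding="UTF-8", xml_declaration=True)


if __name__ == "__main__":
    print(build_image_sitemap({
        "https://example.com/products/": [
            "https://example.com/img/front.jpg",
            "https://example.com/img/back.jpg",
        ],
    }).decode("utf-8"))
```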

News Sitemaps

News sitemaps are specifically designed for Google News inclusion. They only list articles published within the past 48 hours and require the news: namespace extension. If you run a news publication or frequently publish time-sensitive content, this is worth implementing.

Sitemap Index Files

When your site has more than 50,000 URLs, or when you want to organize your sitemap by content type for easier monitoring, you use a sitemap index file. The index file itself is an XML file that simply lists the locations of your individual sitemaps:

    <?xml version="1.0" encoding="UTF-8"?>
    <sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <sitemap>
        <loc>https://example.com/sitemap-pages.xml</loc>
        <lastmod>2025-07-01</lastmod>
      </sitemap>
      <sitemap>
        <loc>https://example.com/sitemap-blog.xml</loc>
        <lastmod>2025-07-19</lastmod>
      </sitemap>
      <sitemap>
        <loc>https://example.com/sitemap-products.xml</loc>
        <lastmod>2025-07-20</lastmod>
      </sitemap>
    </sitemapindex>

You submit the index file URL to Google Search Console, and it automatically processes all the referenced child sitemaps.
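For sites that cross the 50,000-URL mark, the split can be automated. The sketch below is one possible approach, not a prescribed one: it chunks a URL list into groups of at most 50,000 and builds an index that points at one child file per chunk. The child file naming (sitemap-1.xml, sitemap-2.xml, and so on) is an assumption made for illustration.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_FILE = 50_000  # per-file URL limit from the sitemap protocol


def chunk_urls(urls, size=MAX_URLS_PER_FILE):
    """Split a list of URLs into consecutive chunks of at most `size`."""
    return [urls[i:i + size] for i in range(0, len(urls), size)]


def build_index(child_locs):
    """Return sitemap index XML (bytes) listing each child sitemap URL."""
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for loc in child_locs:
        sitemap = ET.SubElement(index, "sitemap")
        ET.SubElement(sitemap, "loc").text = loc
    return ET.tostring(index, encoding="UTF-8", xml_declaration=True)


if __name__ == "__main__":
    urls = [f"https://example.com/p/{n}/" for n in range(120_000)]
    chunks = chunk_urls(urls)
    # Hypothetical naming scheme for the child sitemap files.
    children = [f"https://example.com/sitemap-{n}.xml" for n in range(1, len(chunks) + 1)]
    print(build_index(children).decode("utf-8"))
```

In practice you would probably chunk per content type (blog, products, pages) rather than over one flat list, so each child sitemap maps to a section you can monitor separately.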

XML Sitemap Tags Explained

Understanding what each tag does helps you build a sitemap that communicates the right signals.

| Tag | Required? | Purpose | Google Uses It? |
|---|---|---|---|
| <urlset> | Yes | Root element, declares the namespace | Yes (required) |
| <url> | Yes | Container for each page entry | Yes (required) |
| <loc> | Yes | Absolute URL of the page | Yes |
| <lastmod> | No | Date the page was last modified (ISO 8601) | Yes (when accurate) |
| <changefreq> | No | How often the page changes | No (ignored by Google) |
| <priority> | No | Relative importance (0.0 to 1.0) | No (ignored by Google) |

The key takeaway from this table: Google ignores the <changefreq> and <priority> tags, so setting every page to <priority>1.0</priority> does nothing. Focus your effort on accurate <lastmod> dates instead, as Google does use those to determine which pages to recrawl.

Always use absolute URLs in <loc> tags (starting with https://), escape special characters properly (an ampersand must be written as &amp;), and use UTF-8 encoding throughout.
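If you assemble sitemap XML with string templates rather than an XML library, escaping is easy to forget. A small sketch of the idea in Python, where xml_safe_loc is a hypothetical helper name:

```python
from xml.sax.saxutils import escape


def xml_safe_loc(url):
    """Escape the characters that are not allowed raw inside a <loc> value.

    escape() handles &, < and >; the extra mapping covers both quote types.
    Pass in the raw URL only: escaping an already-escaped URL would turn
    &amp; into &amp;amp;.
    """
    return escape(url, {"'": "&apos;", '"': "&quot;"})


if __name__ == "__main__":
    print(xml_safe_loc("https://example.com/search?q=shoes&page=2"))
```

XML libraries such as Python's xml.etree.ElementTree perform this escaping automatically when you set element text, so a helper like this is only needed for hand-built strings.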

How to Create a Sitemap by Platform

WordPress

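WordPress has shipped a basic built-in XML sitemap since version 5.5 (at yourdomain.com/wp-sitemap.xml), but it offers little control over what is included. Two popular plugins handle it better: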

Yoast SEO: Automatically generates a sitemap index at yourdomain.com/sitemap_index.xml with separate sitemaps for posts, pages, categories, and authors. Configure which post types to include under SEO Settings, then re-check whenever you add new content types.

Rank Math: Similar functionality with slightly more granular control. Both are solid options. You do not need both.

After installing either plugin, verify the sitemap exists by visiting the URL directly in your browser. You should see valid XML. Then submit the URL to Google Search Console.

Shopify

Shopify automatically generates a sitemap at yourdomain.com/sitemap.xml. It includes all published products, collections, blogs, and pages. You cannot directly edit it, but you control what appears by publishing or hiding content in the Shopify admin.

Submit the auto-generated sitemap to Google Search Console and Bing Webmaster Tools. Shopify handles the regeneration automatically as you add products or pages.

Squarespace and Wix

Both platforms automatically generate and maintain XML sitemaps. Squarespace places its sitemap at yourdomain.com/sitemap.xml. Wix generates one at the same URL. Neither requires manual intervention, though submitting the URL to Search Console is still your responsibility.

Custom or Framework-Based Sites

For sites built with custom code, static site generators (like Astro, Next.js, or Hugo), or headless CMS setups, you have three options:

- Framework plugins: Most static site frameworks have sitemap plugins. Astro has @astrojs/sitemap. Next.js has next-sitemap. These auto-generate sitemaps at build time.

- Dynamic generation: Build a server-side route that queries your database or CMS at request time and outputs valid XML. This ensures the sitemap always reflects the current state of your site.

- Third-party generators: Tools like Screaming Frog, XML-Sitemaps.com, or Sitebulb can crawl your site and generate a sitemap file. Best for smaller static sites where automation is not needed. Our free Sitemap Generator is another option for quickly producing a clean, submit-ready XML sitemap.
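To make the dynamic generation option concrete, here is a minimal sketch of a server-side route written as a plain Python WSGI app. It is an illustration under stated assumptions, not a production setup: fetch_pages is a hypothetical stand-in for your database or CMS query, and the record fields (url, updated) are invented for the example.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def fetch_pages():
    """Hypothetical stand-in for a database or CMS query."""
    return [
        {"url": "https://example.com/", "updated": date(2025, 7, 1)},
        {"url": "https://example.com/services/", "updated": date(2025, 6, 15)},
    ]


def render_sitemap(pages):
    """Turn page records into sitemap XML (bytes)."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = page["url"]
        # lastmod comes straight from the record, so it stays accurate.
        ET.SubElement(url, "lastmod").text = page["updated"].isoformat()
    return ET.tostring(urlset, encoding="UTF-8", xml_declaration=True)


def app(environ, start_response):
    """WSGI app: serve the sitemap at /sitemap.xml, 404 for anything else."""
    if environ.get("PATH_INFO") != "/sitemap.xml":
        start_response("404 Not Found", [("Content-Type", "text/plain")])
        return [b"Not found"]
    body = render_sitemap(fetch_pages())
    start_response("200 OK", [
        ("Content-Type", "application/xml"),
        ("Content-Length", str(len(body))),
    ])
    return [body]


if __name__ == "__main__":
    from wsgiref.simple_server import make_server
    make_server("", 8000, app).serve_forever()
```

Because the XML is built on each request, new or removed pages show up in the sitemap without a rebuild. On a large site you would likely cache the output for a few minutes rather than query on every hit.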

For custom-built sites, our team at Web Aloha typically implements dynamic sitemap generation so your sitemap stays current automatically, regardless of how often content changes.

Static vs Dynamic Sitemaps

A static sitemap is a file you manually create and upload. It works for sites that rarely change, but quickly becomes outdated.

A dynamic sitemap is generated programmatically, either at build time or on each request. It reflects the current state of your site without any manual effort.

Choose static if your site has fewer than 20 pages and changes less than once a month.

Choose dynamic if your site has a blog, product catalog, or any content that changes regularly. The operational overhead of keeping a manual sitemap accurate is rarely worth it.

How to Submit Your Sitemap to Google Search Console

Getting a sitemap generated is only half the work. You need to tell Google where it is.

Step-by-Step: Google Search Console

- Sign in to Google Search Console

- Select your property (verify ownership first if you have not already)

- In the left sidebar, click Indexing, then Sitemaps

- In the “Add a new sitemap” field, enter your sitemap URL (just the path, e.g., sitemap.xml)

- Click Submit

Google will begin processing your sitemap. Within a few days, the Sitemaps report will show how many URLs were submitted vs. how many were indexed. That gap is worth investigating. Our Google Search Console guide covers how to interpret these reports in detail.

Step-by-Step: Bing Webmaster Tools

- Sign in to Bing Webmaster Tools

- Select your site

- Go to Sitemaps in the left menu

- Click Submit sitemap and enter your full sitemap URL

- Click Submit

Bing powers search results across Bing, DuckDuckGo, and several other engines. It is worth the two-minute submission. Many site owners skip this and leave meaningful traffic on the table.

Also: Add Your Sitemap to robots.txt

Reference your sitemap from your robots.txt file. This allows any crawler, not just Google and Bing, to discover your sitemap automatically:

    User-agent: *
    Disallow: /admin/
    Sitemap: https://yourdomain.com/sitemap.xml

Use our free Robots.txt Generator to create a properly formatted robots.txt file. For a deeper look at how robots.txt interacts with sitemaps and AI crawlers, see our robots.txt guide.
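To confirm that a crawler can actually read the Sitemap line, you can parse the file the same way a well-behaved bot would. The sketch below uses Python's built-in urllib.robotparser (its site_maps() method requires Python 3.8 or later) on the example file above. The robots.txt content is inlined here for illustration; against a live site you would call set_url() and read() instead.

```python
from urllib.robotparser import RobotFileParser

# Inlined copy of the example robots.txt, for illustration only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Sitemap: https://yourdomain.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Sitemap URLs declared in the file (None if there are no Sitemap lines).
sitemaps = parser.site_maps()

# Whether a generic crawler may fetch a given URL under these rules.
admin_allowed = parser.can_fetch("*", "https://yourdomain.com/admin/")
home_allowed = parser.can_fetch("*", "https://yourdomain.com/")

if __name__ == "__main__":
    print(sitemaps, admin_allowed, home_allowed)
```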

How Search Engines Use Your Sitemap

Understanding the flow from sitemap submission to page indexing helps you troubleshoot when something is not working.

| Stage | What Happens | Time Frame |
|---|---|---|
| 1. Discovery | Google finds your sitemap via Search Console submission or robots.txt | Minutes to hours |
| 2. Fetch | Googlebot downloads the sitemap file and parses the URL list | Hours to 1-2 days |
| 3. Queue | URLs are added to the crawl queue, prioritized by signals like lastmod and page authority | 1-3 days |
| 4. Crawl | Googlebot visits each URL, processes the HTML, evaluates content and signals | Days to weeks |
| 5. Index Decision | Google decides whether to add the page to its index based on quality, canonicalization, and directives | Days to weeks |
| 6. Ranking | Indexed pages compete in search results based on relevance, authority, and quality signals | Weeks to months |

A sitemap helps most with stages 1-3. It speeds up discovery and queuing. It does not influence the indexing decision or ranking. For that, you need strong content and solid on-page SEO fundamentals.

Sitemap Best Practices

Only Include Indexable URLs

This is the most important rule. Your sitemap should only contain pages you want indexed. Exclude:

- Pages with a noindex meta tag

- Pages blocked by robots.txt

- Redirected URLs (301s and 302s)

- Pages returning 4xx or 5xx status codes

- Thin, duplicate, or session-parameterized URLs

- Admin, login, and thank-you pages

Including non-indexable pages sends a conflicting signal: the sitemap asks Google to crawl a URL that another directive tells it to skip. It also wastes crawl requests and clutters your Search Console reports with avoidable warnings.
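The exclusion rules above translate naturally into a filter you can run before writing the sitemap. This is a sketch only: the Page record and its fields are hypothetical, and in practice the values would come from a crawl export or your CMS.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Page:
    """Hypothetical page record, e.g. one row of a crawl export."""
    url: str
    status: int
    noindex: bool = False
    blocked_by_robots: bool = False
    canonical: Optional[str] = None  # None means no canonical tag found


def is_indexable(page):
    """Apply the exclusion rules: only clean, canonical 200s pass."""
    if page.status != 200:  # redirects, 4xx and 5xx are excluded
        return False
    if page.noindex or page.blocked_by_robots:
        return False
    if page.canonical and page.canonical != page.url:
        return False  # a variant pointing at another primary URL
    return True


def sitemap_candidates(pages):
    return [p.url for p in pages if is_indexable(p)]


if __name__ == "__main__":
    pages = [
        Page("https://example.com/", 200),
        Page("https://example.com/old/", 301),
        Page("https://example.com/thanks/", 200, noindex=True),
        Page("https://example.com/shoes/?sort=price", 200,
             canonical="https://example.com/shoes/"),
    ]
    print(sitemap_candidates(pages))
```

Thin or duplicate content cannot be detected from status codes alone, so that part of the list still needs a human or a content audit.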

Keep Sitemap Files Under Limits

Each sitemap file: maximum 50,000 URLs and 50MB uncompressed. Exceeding either limit means Google may not process the entire file.

For large sites, use a sitemap index with segmented child sitemaps. Segment by content type (blog, products, landing pages) rather than arbitrarily splitting alphabetically. This makes it easier to diagnose indexing issues for specific content sections.

Use Accurate lastmod Dates

If you use <lastmod>, make sure it reflects a real, meaningful content update. Do not update it just to nudge Google into recrawling a page. Google learns over time whether your <lastmod> values are trustworthy. If they are frequently inaccurate, Google may stop relying on them.

Good CMS integrations and dynamic sitemap scripts pull the last-modified date directly from the database record, keeping it accurate automatically.
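When the date comes from a database timestamp, it needs to be serialized in the ISO 8601 form that <lastmod> expects. A minimal sketch, assuming that naive timestamps in your database are stored in UTC (adjust if yours are not); to_lastmod is a hypothetical helper name:

```python
from datetime import datetime, timezone


def to_lastmod(updated_at):
    """Format a datetime as an ISO 8601 value suitable for <lastmod>."""
    if updated_at.tzinfo is None:
        # Assumption: naive timestamps are stored in UTC.
        updated_at = updated_at.replace(tzinfo=timezone.utc)
    return updated_at.astimezone(timezone.utc).isoformat(timespec="seconds")


if __name__ == "__main__":
    print(to_lastmod(datetime(2025, 7, 10, 14, 30)))
```

A date-only value such as 2025-07-10 is also valid. The full timestamp is mainly useful for pages that change several times a day.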

Reflect the Canonical URL

Every URL in your sitemap should be the canonical version of that page. If you use canonical tags to point variants to a primary URL, only the primary URL should appear in the sitemap. Including non-canonical URLs contradicts your canonical directives.

Compress Large Sitemaps

Sitemap files can be gzip-compressed to save bandwidth. The 50MB limit still applies to the uncompressed size, so compression does not let you fit more URLs into one file. Most web servers and CMS plugins handle compression automatically. Verify your sitemap is accessible by visiting the URL directly in a browser.
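If you produce the compressed file yourself, check the size before compressing, since the 50MB limit is measured on the uncompressed file. A short sketch with Python's gzip module; the function name compress_sitemap and the output filename in the usage example are illustrative.

```python
import gzip

MAX_UNCOMPRESSED_BYTES = 50 * 1024 * 1024  # 50MB, measured before compression


def compress_sitemap(xml_bytes, limit=MAX_UNCOMPRESSED_BYTES):
    """Gzip sitemap bytes, refusing input that exceeds the size limit."""
    if len(xml_bytes) > limit:
        raise ValueError(
            f"sitemap is {len(xml_bytes)} bytes uncompressed; "
            f"split it into several files (limit {limit})"
        )
    return gzip.compress(xml_bytes)


if __name__ == "__main__":
    data = b"<urlset xmlns='http://www.sitemaps.org/schemas/sitemap/0.9'/>"
    with open("sitemap.xml.gz", "wb") as fh:
        fh.write(compress_sitemap(data))
```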

Common Sitemap Mistakes and How to Fix Them

Even well-intentioned sitemaps often contain problems that reduce their effectiveness. A technical SEO audit will typically surface these.

Including Redirect URLs

URLs that redirect to another page should never appear in the sitemap. Include the final destination URL instead. Submitting redirect URLs wastes crawl budget and confuses crawlers.

Fix: Audit your sitemap with a crawler tool like Screaming Frog. Filter for non-200 status codes. Remove any URLs that redirect, return errors, or are no longer live.

Inconsistent URL Formats

Mixing https:// and http://, including and excluding trailing slashes, or using both www and non-www versions creates multiple entries for what should be the same page.

Fix: Standardize on one URL format across your entire site. Use the exact same format in your sitemap, canonical tags, internal links, and Google Search Console property. Use our XML Sitemap Validator to check for format consistency.
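A quick script can flag entries that break your chosen convention. The sketch below assumes a convention of https, a single host, and trailing slashes; all three are parameters, and the function name find_format_mismatches is made up for this example.

```python
from urllib.parse import urlsplit


def find_format_mismatches(urls, scheme="https", host="example.com",
                           trailing_slash=True):
    """Return (url, reason) pairs for URLs that break the chosen convention."""
    mismatches = []
    for url in urls:
        parts = urlsplit(url)
        if parts.scheme != scheme:
            mismatches.append((url, "scheme"))
        elif parts.netloc != host:
            mismatches.append((url, "host"))
        elif parts.path.endswith("/") != trailing_slash:
            mismatches.append((url, "trailing slash"))
    return mismatches


if __name__ == "__main__":
    for url, reason in find_format_mismatches([
        "https://example.com/services/",
        "http://example.com/about/",
        "https://www.example.com/blog/",
        "https://example.com/contact",
    ]):
        print(reason, url)
```

The trailing-slash check is deliberately naive: it would also flag file URLs such as /brochure.pdf, so exempt those if your site serves them.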

Forgetting to Update After Site Changes

A stale sitemap that lists old URLs or misses new pages is actively harmful. When you migrate pages, launch new content, or retire old sections, the sitemap must be updated.

Fix: Use a dynamic sitemap generated by your CMS or framework. If manual management is unavoidable, schedule a monthly audit to compare your sitemap against your actual page inventory.
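The monthly audit can be as simple as comparing two sets of URLs. A sketch, assuming you can export the list of live pages from your CMS or a crawl; the function names are illustrative.

```python
import xml.etree.ElementTree as ET

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Extract the set of <loc> values from a urlset sitemap."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", NS)}


def diff_inventory(xml_text, live_urls):
    """Compare the sitemap against the pages that actually exist."""
    listed = sitemap_urls(xml_text)
    live = set(live_urls)
    return {
        "missing_from_sitemap": sorted(live - listed),
        "stale_in_sitemap": sorted(listed - live),
    }


if __name__ == "__main__":
    xml_text = (
        '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
        "<url><loc>https://example.com/</loc></url>"
        "<url><loc>https://example.com/old/</loc></url>"
        "</urlset>"
    )
    print(diff_inventory(xml_text, ["https://example.com/", "https://example.com/new/"]))
```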

Not Submitting to Search Console

Placing your sitemap URL in robots.txt is good practice, but it is not a substitute for Search Console submission. Search Console gives you visibility into sitemap errors, coverage reports, and indexing status. Without it, you are flying blind.

Fix: Submit your sitemap through Google Search Console and Bing Webmaster Tools. Review the coverage reports monthly.

Setting Every Page to Priority 1.0

This is a widespread but harmless mistake, since Google ignores the <priority> tag anyway. But it reveals a misunderstanding: if every page is “highest priority,” no prioritization has occurred. Focus on keeping your sitemap clean instead.

When You Might NOT Need a Sitemap

Google’s own documentation says sitemaps provide the most value for: large sites, sites with rich media (video, images), new sites without many external links, and sites with deep content hierarchies.

For a simple 3-5 page brochure site with strong internal linking and existing backlinks, a sitemap is unlikely to make a meaningful difference. Google will find those pages through links alone.

That said, a sitemap costs almost nothing to add, and the upside of faster indexing is real. There is rarely a good reason not to have one. The SEO for small businesses fundamentals we recommend include a sitemap from day one.

Monitoring Sitemap Health Over Time

Submitting your sitemap is not a one-time task. Regular monitoring catches problems before they affect your rankings.

Google Search Console Coverage Report

The Coverage report (labeled Page indexing in the current Search Console interface) shows how many URLs were submitted in your sitemap, how many are actually indexed, and which pages Google excluded and why. Common exclusion reasons include:

- “Crawled, currently not indexed” (Google crawled but chose not to index)

- “Duplicate, submitted URL not selected as canonical” (canonical tag conflict)

- “Blocked by robots.txt” (contradictory instructions)

- “Redirect error” (a redirect URL was included)

Review this report monthly. Any significant gap between submitted and indexed URLs deserves investigation. Our Google Search Console guide explains how to interpret each coverage status.

Track Index Coverage with a Checker

Use our free Google Index Checker to verify that your most important pages are actually indexed. Paste in a list of your priority URLs and see which ones Google has in its index.

Validate Sitemap Syntax Regularly

XML syntax errors in your sitemap file can prevent Google from parsing it entirely. Use our XML Sitemap Validator to confirm your sitemap is well-formed before and after any significant site changes.
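For a quick local check, a few lines of Python can catch the most common problems before you upload. This sketch only tests well-formedness, the root element, and that every entry has an absolute <loc>. It is not a full schema validation, and check_sitemap is a hypothetical name.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def check_sitemap(xml_bytes):
    """Return a list of problems; an empty list means the basic checks passed."""
    try:
        root = ET.fromstring(xml_bytes)
    except ET.ParseError as exc:
        return [f"not well-formed XML: {exc}"]

    valid_roots = (f"{{{SITEMAP_NS}}}urlset", f"{{{SITEMAP_NS}}}sitemapindex")
    if root.tag not in valid_roots:
        return [f"unexpected root element: {root.tag}"]

    problems = []
    for entry in root:
        loc = entry.find(f"{{{SITEMAP_NS}}}loc")
        text = (loc.text or "").strip() if loc is not None else ""
        if not text:
            problems.append("entry without a <loc> value")
        elif not text.startswith(("http://", "https://")):
            problems.append(f"relative or non-HTTP URL: {text}")
    return problems


if __name__ == "__main__":
    with open("sitemap.xml", "rb") as fh:
        for problem in check_sitemap(fh.read()) or ["no problems found"]:
            print(problem)
```

An unescaped ampersand in a URL is the classic failure this catches: the parser rejects the whole file, which is also what a search engine would do.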

Watch for Sitemap Fetch Errors in Search Console

If Google cannot fetch your sitemap file (due to a server error, authentication requirement, or firewall block), it will show a fetch error in the Sitemaps report. Set up Search Console email alerts to be notified when these occur.

Sitemaps in the Context of Your Overall SEO Strategy

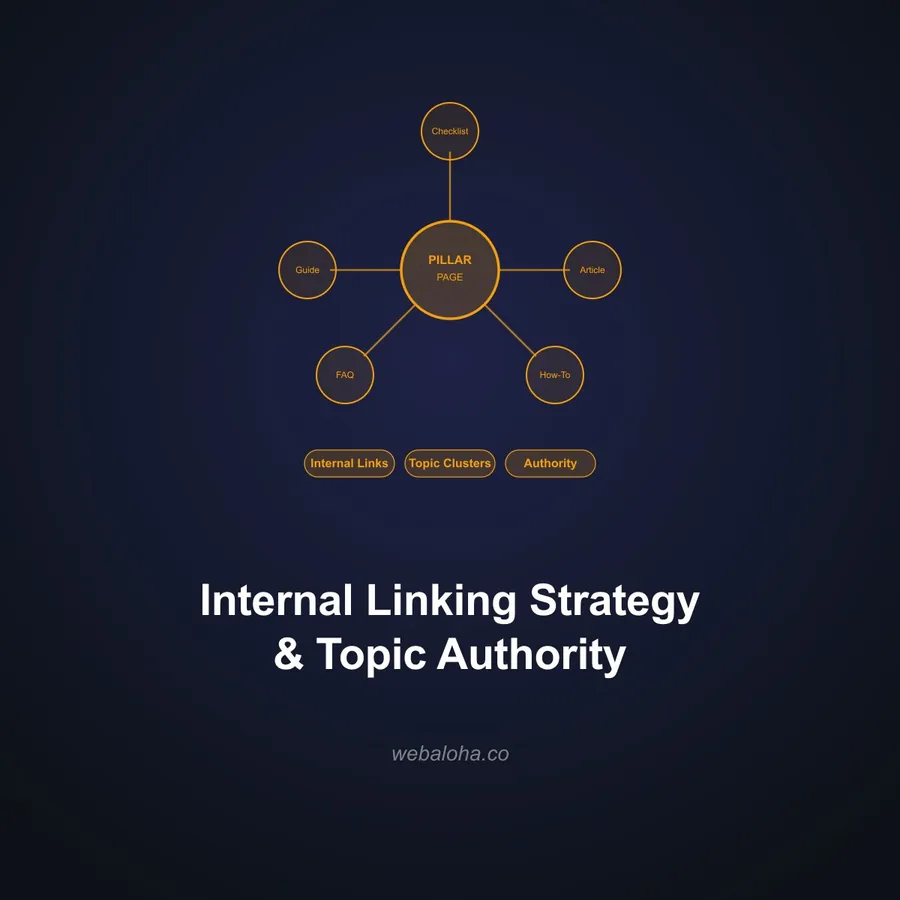

A sitemap is one component of a broader technical foundation. It works alongside your robots.txt configuration, canonical tag implementation, internal linking structure, Core Web Vitals performance, and structured data markup.

Sitemaps help with discovery. Rankings come from quality signals: content relevance, E-E-A-T authority, page experience, and backlinks.

For businesses wondering whether SEO is worth the investment: the technical foundation, including a properly maintained sitemap, is the prerequisite that makes everything else work. You can write excellent content and build strong links, but if Google cannot efficiently crawl and index your site, those efforts are limited.

If you are unsure whether your site has sitemap issues, our SEO services include a full technical audit as part of every engagement. We check sitemap health, coverage gaps, canonical conflicts, and crawl efficiency as part of the baseline setup.